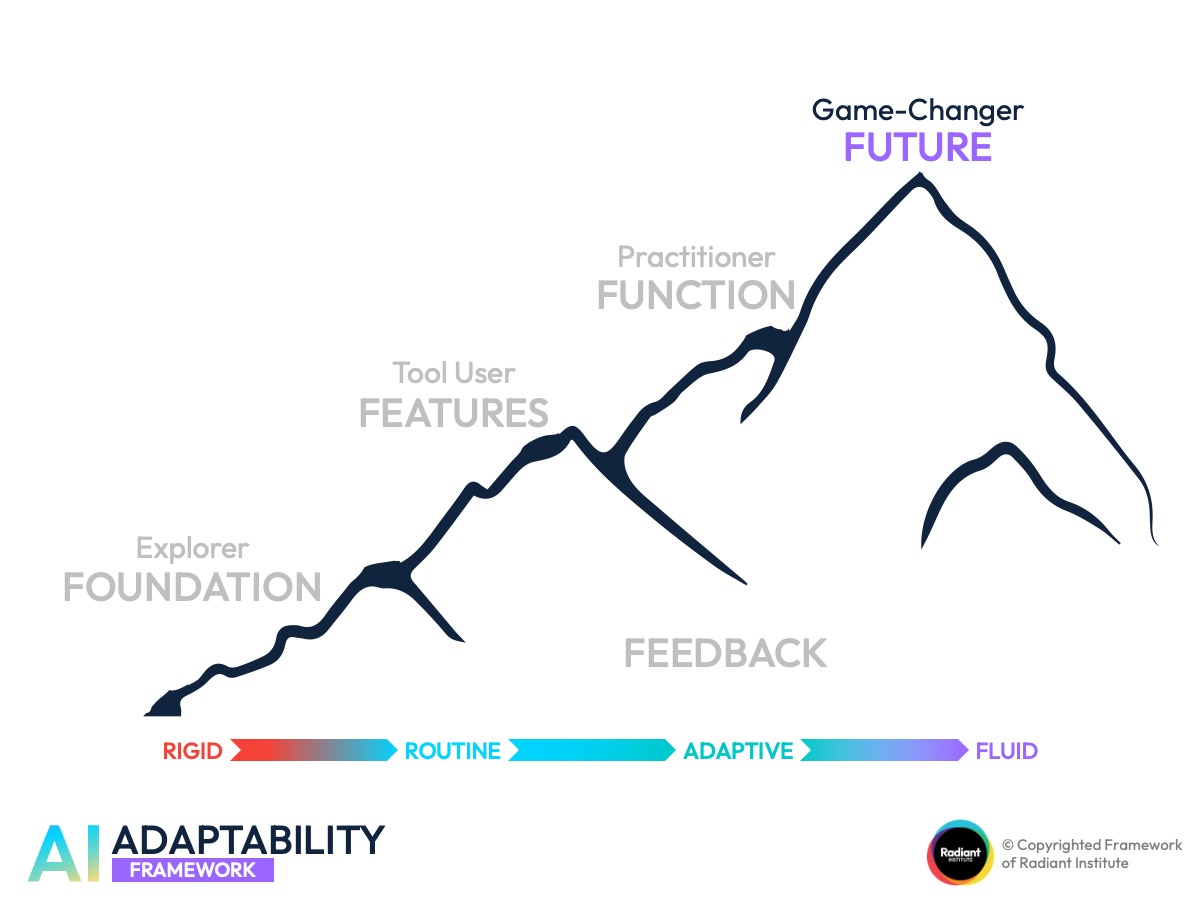

The AI Adaptability Framework

The AI capability framework that moves your people from awareness to adaptability.

- Work Faster

- Think Sharper

- Learn Smarter

Most organisations stop at access.

They roll out tools, run awareness sessions, and assume the job is done.

But access is not application. And activity is not fluency.

The AI Adaptability Framework helps leaders see where their teams really stand and what it takes to close the gap.

Why this AI capability framework exists

The problem is not whether your people have AI.

It is whether they can adapt with it.

Most AI rollouts stall not because people lack access, but because organisations confuse exposure with capability.

Here is what typically happens after an AI rollout:

The Knowledge Myth:

“We ran the awareness session. Everyone knows about AI now.”

But knowing AI exists is not the same as knowing how to use it. Awareness without application changes nothing.

The Access Myth:

“Everyone has Copilot/ChatGPT/Gemini. We are covered.”

But having a login is not the same as having a workflow. Access without integration is just unused potential.

The Activity Myth:

“Our teams are already using AI every day.”

But are they using it meaningfully? Or are they copying prompts, clicking buttons, and repeating the same rigid steps? Activity without understanding is still shallow.

These three myths explain why many organisations feel stuck even after investing in AI tools and training. The real issue is not whether people have seen the tool. It is whether they can adapt, transfer, and generate meaningful outcomes with it.

That is what the AI Adaptability Framework was built to diagnose and develop.

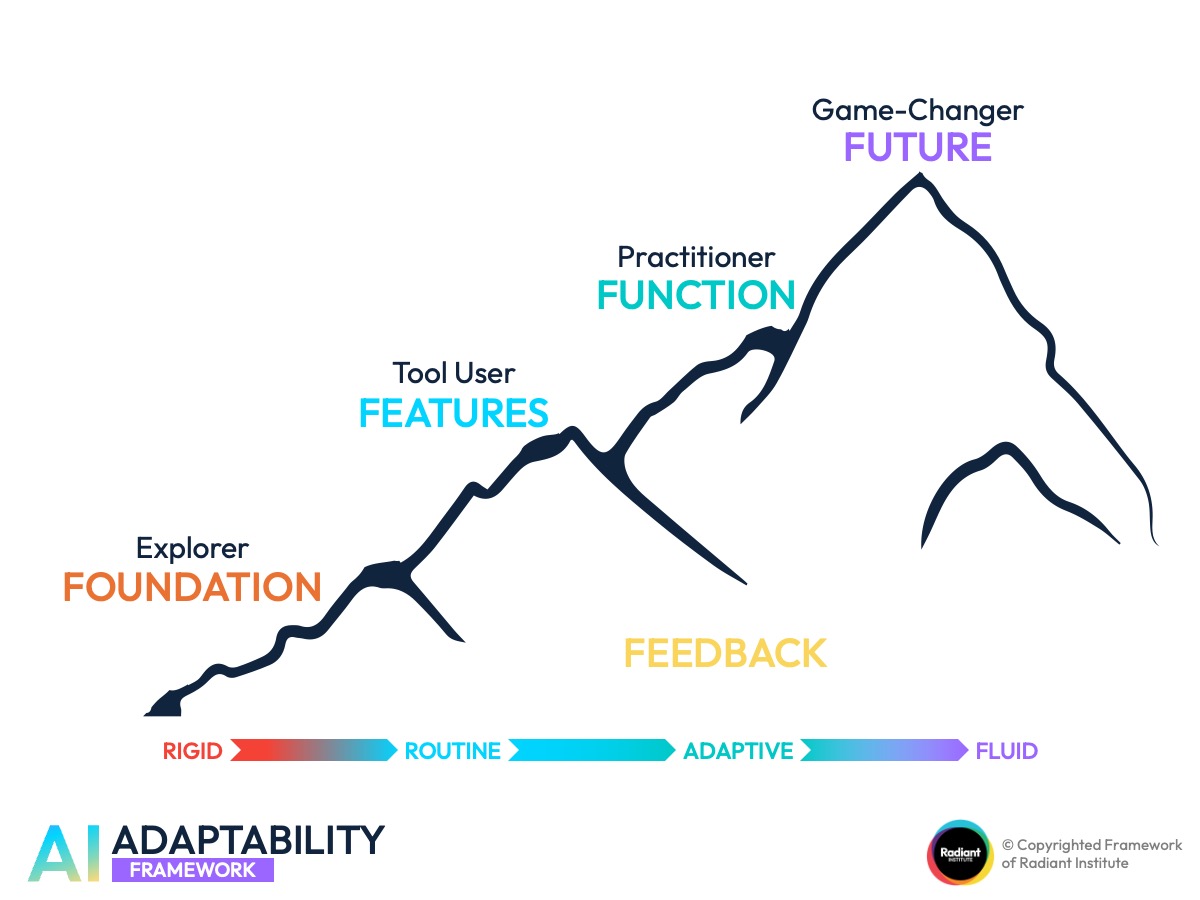

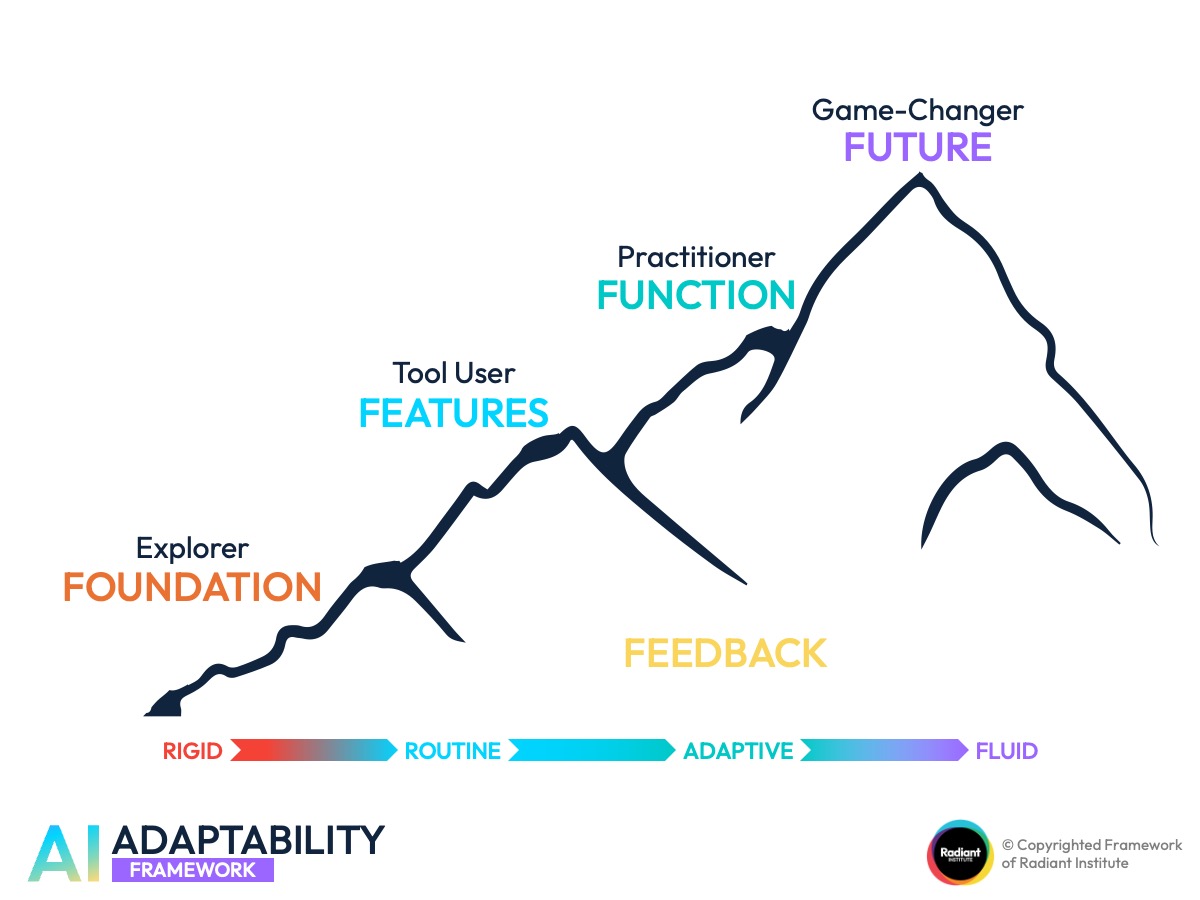

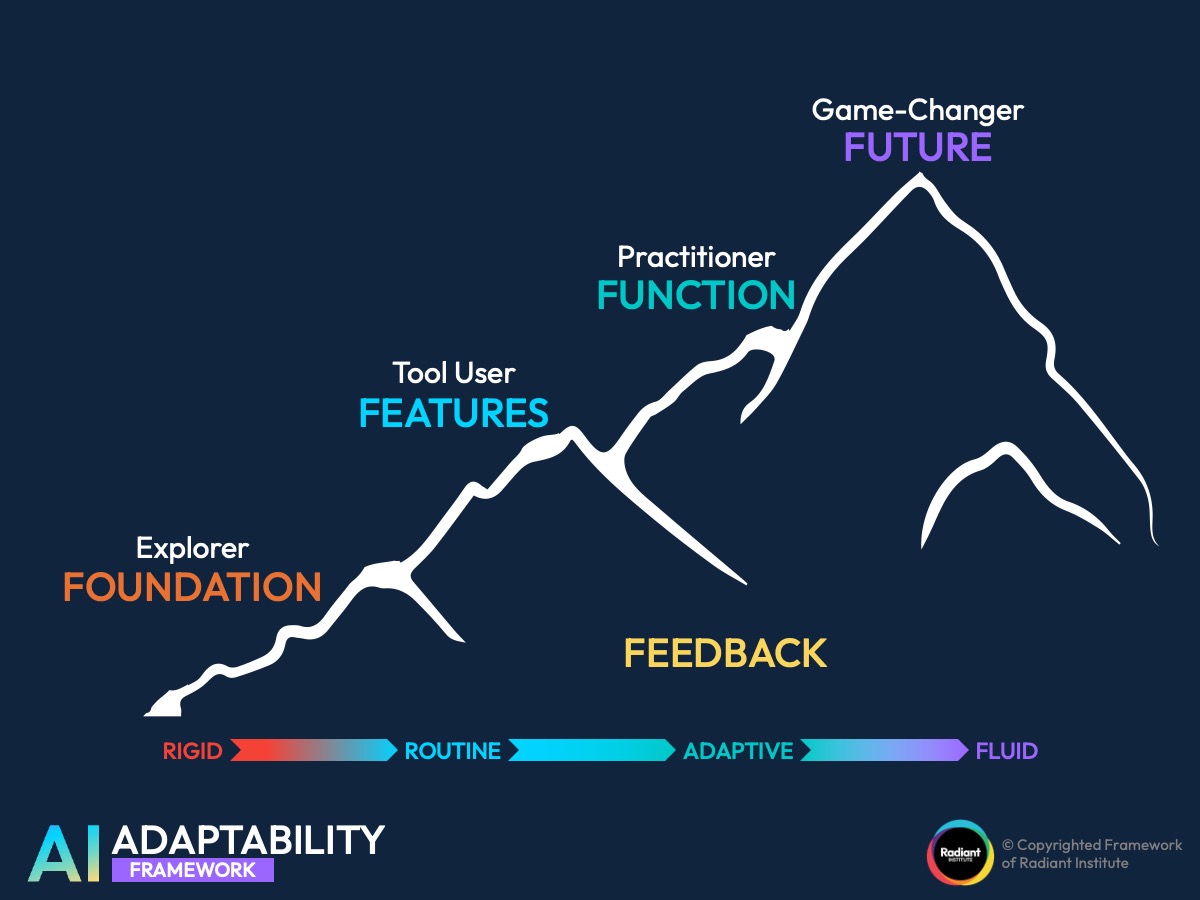

The Framework at a Glance

A four-stage progression from rigid awareness to fluid reinvention.

The AI Adaptability Framework maps AI capability across four levels, each beginning with F: Foundation, Features, Function, and Future.

Read it as a progression from left to right. Each level represents a deeper, more transferable form of capability.

A feedback loop from Future back to Foundation keeps the model cyclical, not static.

Explorer

FOUNDATION

Adaptability Level: Rigid

The starting point. The person may know AI exists, have attended a session, or even have access to the tool. But their capability falls into one of two patterns:

Pattern #1: Aware but not acting.

They know AI is important. They may have attended an awareness session or read about it. But nothing changes in practice.

- “I know AI can help.”

- “I attended the session.”

- “I should probably use it more.”

Pattern #2: Acting without understanding.

They are using the tool, but only through memorised routines, copied prompts, or rigid steps. They know which button to click. They can repeat a workflow. But they get stuck the moment the tool changes, the interface changes, or the context changes.

This is one of the most important distinctions in the framework: a person can still be at Foundation even if they are producing some results, if those results come from rote repetition rather than genuine comprehension.

Think of it like fitness. Many people know they should exercise. Many people know they should eat better. That does not mean they are actually living it.

The same applies to AI. Knowing is not the same as doing, and doing without understanding is not the same as mastery.

Tool User

FEATURES

Adaptability Level: Routine

The person knows what the tool can do. They can name features, describe use cases, and navigate a familiar interface.

They know ChatGPT can summarise, rewrite, brainstorm, and analyse. They know Copilot, Gemini, or Claude each have certain built-in functions.

But they are still bound to one tool, one set of prompts, or one known workflow.

The limitation: they know the tool’s features, but cannot yet flex them across contexts.

Signs someone is still at Features:

- They work well only in one tool.

- They struggle when switching platforms.

- They depend on familiar prompts or known buttons.

- They can explain the feature, but not redesign a workflow around it.

This is real progress, but it is still routine.

Their capability holds up only in known environments with known interfaces.

Practitioner

FUNCTION

Adaptability Level: Adaptive

This is where practical fluency begins. The person connects AI to their actual role, adapts known features to solve real problems, and exercises judgment in execution.

The shift is clear: from “What can the tool do?” to “How do I use this in my actual work?”

At this level:

- A manager uses AI not just to summarise reports, but to structure a weekly decision memo.

- A customer service lead uses AI not just to analyse tickets, but to identify likely spike categories and prep team actions.

- A key account manager uses AI not just to draft an email, but to prep negotiation angles based on meeting notes and account behaviour.

The key distinction from Features: a Tool User knows what the tool can do. A Practitioner knows how to use it for work.

They can adjust within familiar contexts, apply judgment, and connect AI use to real job outcomes.

Game-Changer

FUTURE

Adaptability Level: Fluid

The most strategic level. The person thinks beyond current tasks and reimagines what workflows, decisions, and outcomes could look like. They use AI as a lever for adaptability, not just efficiency.

Instead of asking “How can AI help me do this faster?”, they ask:

- “How can AI help us forecast issues before they happen?”

- “How can AI surface patterns that change how we allocate resources?”

- “What should this workflow become now that AI exists?”

This is where transferability becomes the defining signal. A Game-Changer’s capability survives tool changes, interface changes, and context changes.

They can move from ChatGPT to Claude, from one company’s setup to another, and still preserve the logic of the workflow. Their success is not bound to any single environment.

The distinction between Adaptive and Fluid matters here. A Practitioner adjusts within familiar contexts. A Game-Changer transfers and evolves across unfamiliar ones. That is the difference between competence and true adaptability.

Common Misconceptions this Framework Clears Up

“We already did the training.”

Training creates awareness. It does not guarantee application. A person who attended a session but cannot adapt what they learned is still at Foundation.

“Our people already use AI.”

Using AI is not the same as using it well. If the usage depends on memorised prompts or a single tool, it may still be routine, not adaptive.

“AI literacy is enough.”

There is a critical difference between AI fluency and AI literacy. Literacy means you have seen it. Fluency means you can use it, transfer it, and adapt it across contexts. Most organisations have literacy. Few have fluency.

“Switching tools should be easy.”

If a person’s results collapse when they move from ChatGPT to Claude, or from one interface to another, their capability is shallower than it appears. Transferability is the real test.

“More features means more capability.”

Knowing more buttons does not mean deeper mastery. A Tool User who cannot apply features to their own workflow is still stuck at the Features level.

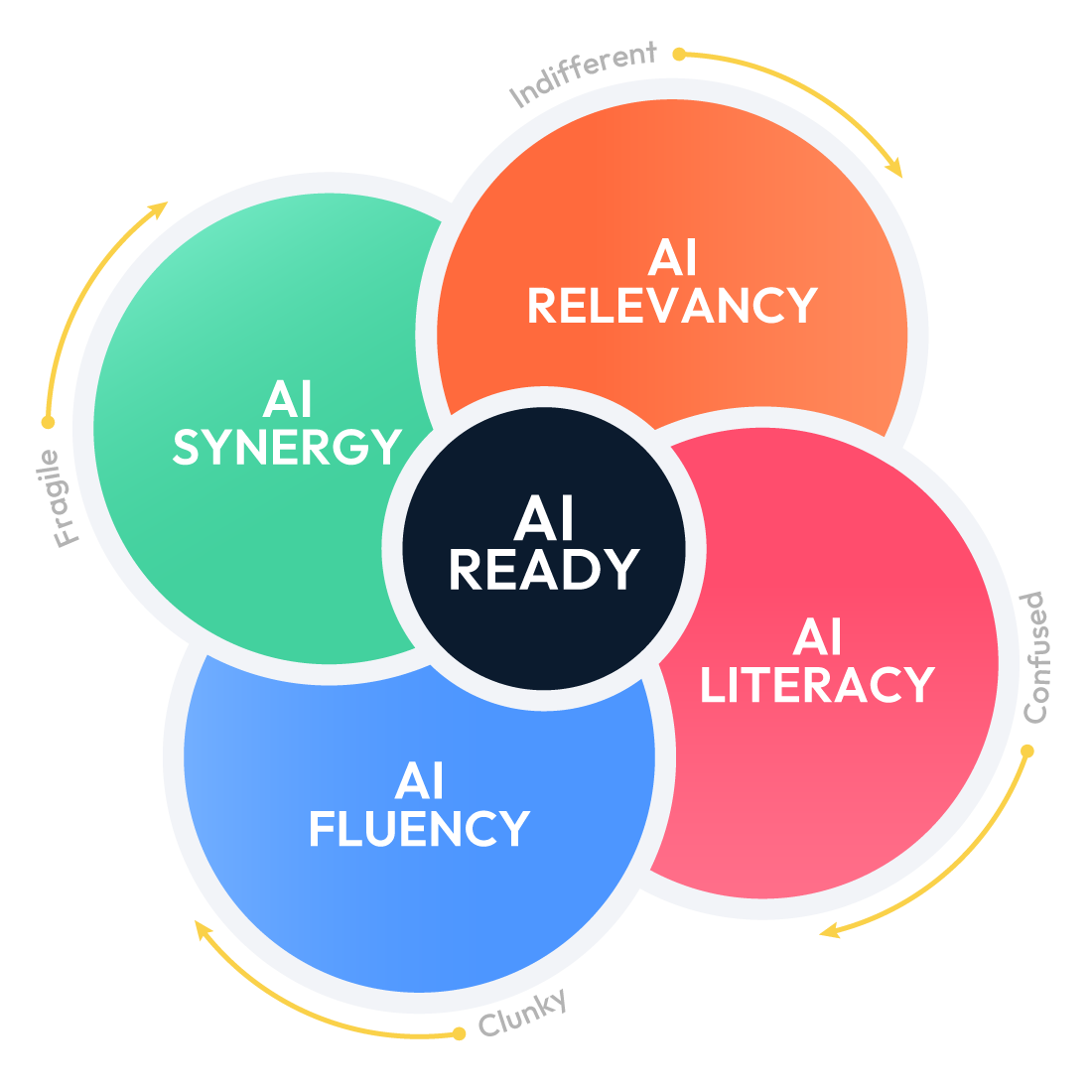

AI Fluency vs. AI Literacy

Why knowing about AI is not the same as knowing how to use it

Why the gap between “knowing about AI” and “using AI well” is wider than most leaders think.

Many organisations believe they have achieved AI readiness because their teams have completed introductory training or have tool access. But there is a meaningful difference between literacy and fluency.

AI Literacy means the person has been exposed to AI concepts, tools, or features. They can recognise what AI is and describe what it does.

AI Fluency means the person can apply AI meaningfully in context, transfer their skills across tools, and adapt when the environment changes.

The AI Adaptability Framework helps leaders stop measuring exposure and start measuring capability. It turns the question from “Have they seen it?” to “Can they use it, adapt it, and evolve with it?”

4 Ways to Use This Framework in Your Organisation

Strategic application guidance for leaders, L&D teams, and consultants.

RECOMMENDATION 1

Diagnose your Team’s Real AI Capability Level

How do we know where our people actually stand, beyond what they tell us?

Most self-assessments overestimate capability. People confuse having access with having fluency. This framework gives you a shared language to cut through that gap.

Instead of asking “Do you use AI?”, use scenario-based questions that reveal whether someone can describe the tool (Features), apply it to their role (Function), or rethink the workflow entirely (Future). The answers expose the real level, not the perceived one.
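One way to record the outcome of such scenario-based questions is a small rubric. The sketch below is illustrative only: the function name `diagnose` and its three yes/no inputs are hypothetical, and it assumes the levels are cumulative (someone who cannot describe the tool is placed at Foundation regardless of other answers), which the framework implies but does not state as a scoring rule.

```python
def diagnose(describes_tool: bool, applies_to_role: bool, rethinks_workflow: bool) -> str:
    """Return the highest framework level evidenced by the answers.

    Assumption: each level requires the one before it, so the first
    missing capability decides the result.
    """
    if not describes_tool:
        return "Foundation"
    if not applies_to_role:
        return "Features"
    if not rethinks_workflow:
        return "Function"
    return "Future"


# Someone who can name features but cannot tie them to their own role.
print(diagnose(describes_tool=True, applies_to_role=False, rethinks_workflow=False))
```

In practice the three inputs would come from an assessor's judgment of open-ended answers, not from a self-reported checkbox.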

This makes AI readiness assessment practical, not theoretical. You move from assumptions to evidence.

RECOMMENDATION 2

Design the Right Intervention for Each Level

Where are the real gaps, and what kind of support does each group actually need?

Not every gap is a training gap. A team stuck at Foundation may need orientation. A team at Features may need contextualised application workshops. A team at Function may need consulting on workflow redesign, not another course.

The framework prevents the common mistake of treating every AI challenge as a training problem. It helps you match the intervention to the actual capability level.
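The level-to-intervention matching described here can be written as a simple lookup. This is a minimal sketch, not part of the framework itself: the `Level` enum, the `INTERVENTIONS` table, and `suggest_intervention` are hypothetical names. The Future level is deliberately left out of the table because the text does not name an intervention for it.

```python
from enum import Enum
from typing import Optional


class Level(Enum):
    FOUNDATION = "Foundation"
    FEATURES = "Features"
    FUNCTION = "Function"
    FUTURE = "Future"


# Interventions as named in the text above. Future has no entry
# because the text does not prescribe one for that level.
INTERVENTIONS = {
    Level.FOUNDATION: "orientation",
    Level.FEATURES: "contextualised application workshops",
    Level.FUNCTION: "consulting on workflow redesign",
}


def suggest_intervention(level: Level) -> Optional[str]:
    """Return the suggested intervention, or None if the text names none."""
    return INTERVENTIONS.get(level)


print(suggest_intervention(Level.FEATURES))
```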

RECOMMENDATION 3

Measure Progress Beyond Tool Adoption

How do we track whether AI training for teams is actually working?

Adoption metrics (logins, usage frequency, prompt volume) only tell you about activity. They do not tell you about capability.

This framework adds a second layer: are people moving from rigid to routine? From routine to adaptive? From adaptive to fluid?

That progression is a better signal of return on investment than any dashboard of tool usage.
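As a rough illustration of this second layer, the sketch below compares two diagnostic rounds and counts who moved between adaptability levels. The function names and the sample data are made up for the example and are not results from any real team. It assumes each person is assigned exactly one of the four adaptability levels per round.

```python
from collections import Counter

# Adaptability levels in framework order, lowest to highest.
ADAPTABILITY = ["rigid", "routine", "adaptive", "fluid"]


def count_transitions(before: dict, after: dict) -> Counter:
    """Count (from, to) level changes for people assessed in both rounds."""
    moves = Counter()
    for person, old in before.items():
        new = after.get(person)
        if new is not None and new != old:
            moves[(old, new)] += 1
    return moves


def share_progressed(before: dict, after: dict) -> float:
    """Fraction of people assessed in both rounds who moved up a level or more."""
    both = [p for p in before if p in after]
    if not both:
        return 0.0
    up = sum(
        ADAPTABILITY.index(after[p]) > ADAPTABILITY.index(before[p]) for p in both
    )
    return up / len(both)


# Hypothetical data for illustration only.
before = {"a": "rigid", "b": "routine", "c": "routine", "d": "adaptive"}
after = {"a": "routine", "b": "adaptive", "c": "routine", "d": "adaptive"}

print(count_transitions(before, after))
print(share_progressed(before, after))
```

Counting transitions alongside logins and prompt volume keeps the two layers separate: one describes activity, the other describes movement between levels.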

RECOMMENDATION 4

Future-Proof your AI Capability Strategy

How do we build teams that stay effective even when tools, interfaces, and workflows change?

The highest level of this framework is not about mastering one tool. It is about developing transferability.

Teams that reach Future can move across platforms, adapt to new interfaces, and redesign workflows without starting from scratch.

This is the difference between building a team that uses AI today and building a team that stays capable tomorrow.

EXAMPLE 1

Context: A 12-person marketing team had been using AI tools for six months. Leadership assumed they were well ahead of the curve.

Net-Effect: From scattered tool usage to structured, role-based AI workflows.

What changed:

- A diagnostic revealed that 9 of 12 team members were operating at Features. They knew the tools but were copying the same prompts and struggling when switching between platforms.

- The real gap was not access or awareness. It was the jump from tool familiarity to practical application in their specific marketing workflows.

- After targeted Function-level support, the team began using AI to draft campaign briefs tailored to regional segments, analyse competitor messaging patterns, and prep quarterly planning documents.

EXAMPLE 2

Context: A 40-person operations team had rolled out AI tools across three functions: procurement, fleet scheduling, and compliance reporting. Usage numbers looked healthy.

Net-Effect: From high activity with low impact to redesigned decision workflows.

What changed:

- Usage data showed frequent activity, but a scenario-based diagnostic revealed most staff were at Foundation (Pattern #2): repeating memorised steps without understanding why.

- The compliance sub-team had one member already at Function, who was quietly adapting AI to flag regulatory risks before audits. That approach was invisible to leadership until the framework surfaced it.

- By reframing the need from “more training” to “capability transfer and workflow redesign,” the team moved toward Future-level thinking: using AI not just to fill in reports, but to reshape how decisions about routes, vendors, and risk were made.

Want to see this framework in action?

See how we use the AI Adaptability Framework to build shared language, diagnose real capability, and design interventions that move teams forward.

We apply the AI Adaptability Framework across our programmes to help organisations move beyond surface-level AI adoption. Whether the starting point is awareness, tool rollout, or workflow redesign, the framework ensures the intervention matches the real capability gap, not the assumed one.

The result: teams that do not just use AI, but adapt with it.

Frequently Asked Questions

Common questions about the AI Adaptability Framework, AI capability levels, and how organisations build real AI fluency.

What is the AI Adaptability Framework?

The AI Adaptability Framework is an AI capability framework developed by Radiant Institute. It maps AI capability across four progressive levels: Foundation, Features, Function, and Future. Each level represents a deeper and more transferable form of AI mastery, from basic awareness to strategic reinvention.

What are the four levels?

Foundation (Explorer), Features (Tool User), Function (Practitioner), and Future (Game-Changer). Each level increases in flexibility and adaptability, moving from rigid awareness to fluid reinvention.

How is this different from an AI maturity model?

Most maturity models focus on organisational infrastructure: tools deployed, policies written, budgets allocated. This framework focuses on people. It measures whether individuals and teams can actually apply, adapt, and transfer their AI skills, not just whether the tools are in place.

What is the difference between AI fluency and AI literacy?

AI literacy means a person has been exposed to AI concepts and can recognise what the tools do. AI fluency means they can apply AI in context, adapt across tools, and generate meaningful outcomes. The framework helps organisations move from literacy to fluency.

Can someone be at Foundation even if they use AI regularly?

Yes. If their usage depends on memorised prompts, copied templates, or rigid routines, they may still be at Foundation. The test is not whether they use the tool, but whether they understand why it works and can adapt when conditions change.

How do we assess where our team sits?

Scenario-based questions are more reliable than self-assessment. Ask people to describe how they would use AI to solve a role-specific problem. The depth and adaptability of their answer reveal their real level. This is the basis of a practical AI readiness assessment.

Is this framework tool-specific?

No. The framework is deliberately tool-flexible. It applies whether your organisation uses ChatGPT, Claude, Copilot, Gemini, or any other AI platform. The focus is on capability, not on any single product.

How does Radiant Institute use this framework?

Radiant Institute uses the AI Adaptability Framework as a diagnostic and developmental tool across its AI training programmes for teams, consulting engagements, and capability-building workshops. It helps organisations identify real capability gaps and design the right interventions.