The 7 Drivers of AI Effectiveness

A practical AI effectiveness framework that helps leaders measure, manage, and scale how their teams actually work with AI.

Work Faster

Think Sharper

Learn Smarter

Every organisation wants AI to make work better. But most struggle to define what “better” looks like beyond speed and cost savings. The 7 Drivers of AI Effectiveness gives leaders a shared language and a practical lens for evaluating how well AI is really working across their teams.

Why This AI Effectiveness Framework Exists

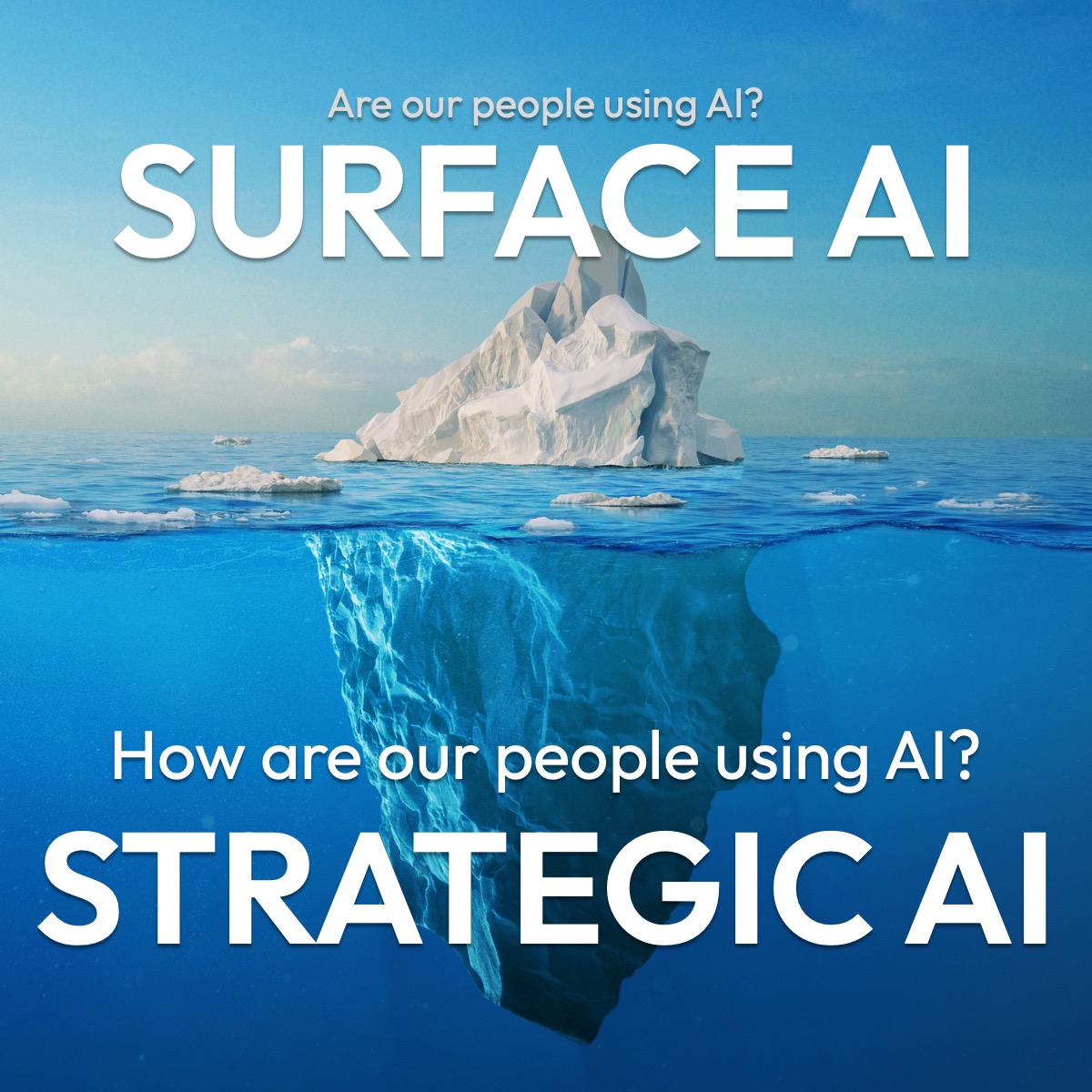

Most organisations are measuring AI adoption. Very few are measuring AI effectiveness.

Think of AI adoption like an iceberg.

Above the waterline sits the surface question most organisations ask: “Are our people using AI?”

That question tracks input: logins, prompts, licence counts.

Below the waterline sits the strategic question: “How are our people using AI?”

That question looks at output: what the work looks like, how decisions are made, and whether the results are spreading.

The gap between Surface AI and Strategic AI is widening. Leaders see tool usage going up, but the expected gains in quality, speed, and capability are not following.

Three common failure modes explain why:

Measurement confusion.

Organisations track logins, prompts, and licence usage. None of these tell you whether AI is actually improving the work. Activity is not effectiveness.

Skill assumptions.

Teams are given tools and expected to figure them out. When output quality stays flat or drops, the tools get blamed. In reality, people were never shown what “good” looks like with AI.

Scaling blindness.

Pockets of excellence exist, but they stay local. One team member finds a brilliant workflow, and nobody else ever hears about it. The organisation keeps reinventing the wheel.

These patterns repeat across industries and functions. The 7 Drivers of AI Effectiveness framework exists to give leaders a structured way to see where AI is working, where it is not, and what to do about it.

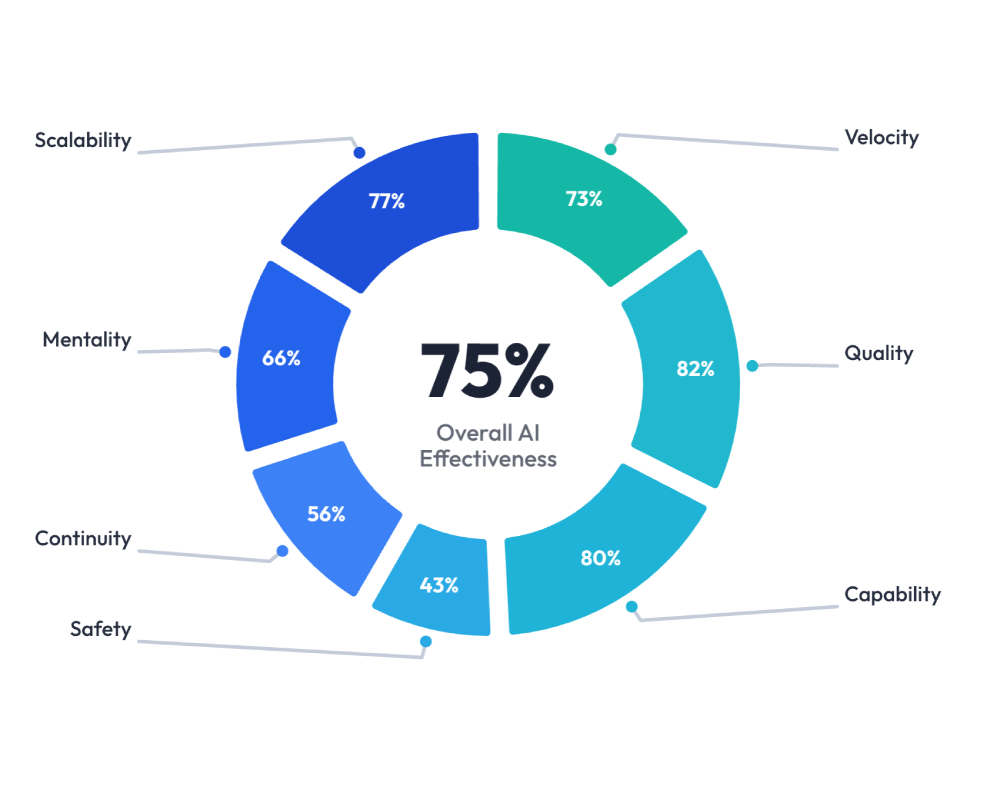

The Framework at a Glance

Seven drivers that together define how effectively a team works with AI.

VELOCITY

How quickly does AI help work move from “started” to “ready to use” in day-to-day tasks?

QUALITY

How often is AI-assisted work accurate, relevant, and usable with minimal correction?

CAPABILITY

To what extent does AI expand what people can do, not just how fast they can do it?

SAFETY

How confidently and responsibly is AI used without introducing risk or misuse?

CONTINUITY

How consistently is AI used across time, tasks, and changing priorities?

MENTALITY

How open, confident, and intentional is the mindset toward working with AI?

SCALABILITY

How easily can effective AI practices spread across people, teams, and workflows?

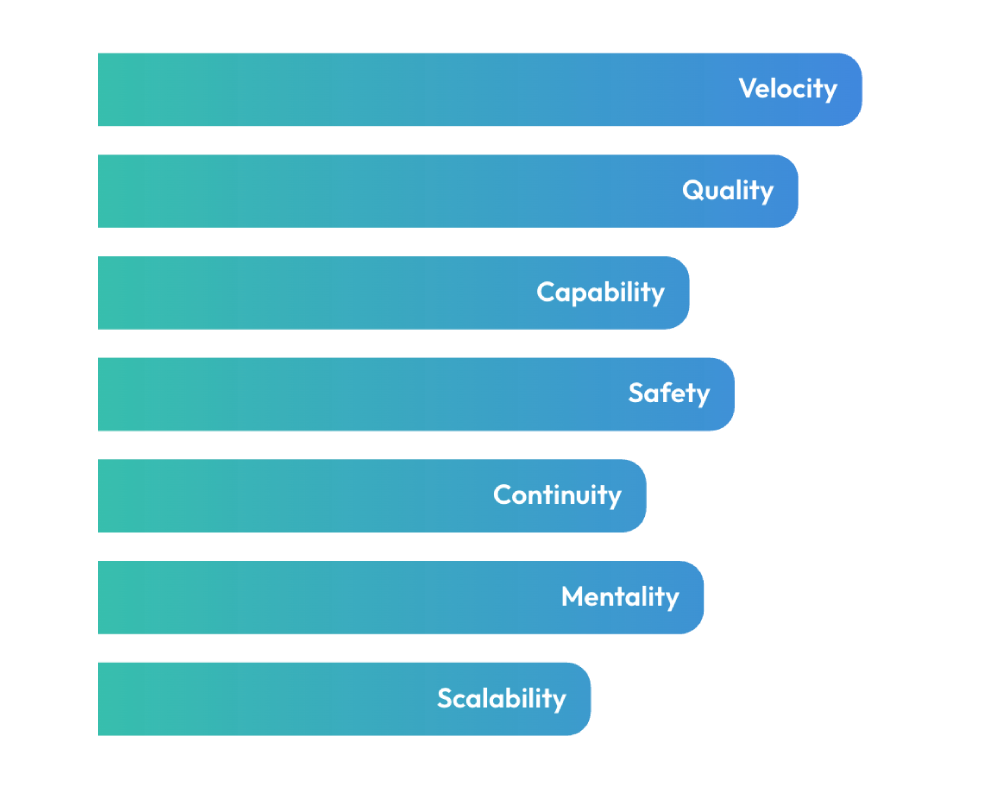

The 7 Drivers are organised into two tiers.

The top four (Velocity, Quality, Capability, and Safety) are output-facing. They describe what the work looks like when AI is used well.

The bottom three (Continuity, Mentality, and Scalability) are system-facing. They describe how sustainable and spreadable those results are.

Each driver is framed as a question that any manager can answer about their team. Below, each driver is defined in more detail, with the observable signs of high and low scorers.

VELOCITY

How quickly work moves from first prompt to an actionable outcome, with minimal drag or revisions. High work velocity means fewer loops, fewer “still refining” delays, and faster, cleaner progress to a clear decision.

HIGH SCORER

- First drafts arrive much faster, and the team spends more time deciding and less time typing.

- Turnaround time for common tasks drops, with no visible drop in quality.

- People arrive at meetings with clearer options or summaries prepared by AI.

- Managers notice that “things move” faster from idea to decision, not just from idea to document.

LOW SCORER

- Work that “used AI” still takes as long as before, sometimes longer.

- There are many rounds of back-and-forth, yet people still say “still refining” for days.

- Deadlines are not moving, even though tool usage is high.

- Managers feel that AI adds noise and extra drafts, not momentum.

QUALITY

How often AI-aided work comes out clear, structured, and genuinely useful instead of generic “AI slop”: long, vague output that adds work for others. When quality is high, people only tweak details; they don’t have to rewrite the thinking.

HIGH SCORER

- AI-assisted work is clear, specific to your context, and easy to act on.

- Others mainly tweak details, examples, or formatting rather than reworking the logic.

- Stakeholders comment that work is “well structured” or “easier to understand.”

- AI is associated with sharper thinking and better decisions, not just faster typing.

LOW SCORER

- AI-assisted work feels generic, overlong, or off-brief, and “sounds like AI.”

- Stakeholders often ask for clarification or send work back because it is unclear or shallow.

- Managers and peers frequently rewrite the thinking, not just the wording.

- People quietly stop trusting AI-generated content and redo it themselves.

CAPABILITY

How far AI helps someone perform tasks they weren’t previously skilled or trained for, and still deliver solid work. High capability means people can produce results, solve problems, and create impact beyond their original skill level, because AI fills the gap.

HIGH SCORER

- People can now handle tasks they were not originally trained for, with AI support.

- Juniors or non-specialists can produce decent analysis, drafts, or content that others can use.

- Absences hurt less, because AI plus simple guidance allows others to step in.

- Leaders can point to real examples of “we could not do this before, now we can.”

LOW SCORER

- People still avoid tasks outside their comfort zone, even when AI could help.

- Work that needs writing, analysis, or synthesis is still routed to the same few “strong” people.

- When someone is away, only a small subset of their tasks can realistically be covered.

- Job scopes feel narrow, and AI does not feature in development or upskilling conversations.

SAFETY

How consistently someone uses AI in ways that protect data and maintain trust, while making it clear what came from AI. High safety shows up in sensible data choices, use of approved tools, and basic provenance like sources, models, and limits.

HIGH SCORER

- People pause before pasting and ask, “Should this data go into this tool?”

- Approved tools and safer setups are used for real work, not just pilots.

- AI-shaped work is transparent: people are open about AI’s role, limits, and source quality.

- Risk and trust are seen as shared responsibilities, not blockers to “getting things done.”

LOW SCORER

- People paste internal or customer details into any AI tool that is convenient.

- There is confusion about what is allowed, and “we are probably fine” is the common attitude.

- AI-assisted work is shared without any clarity on what came from AI or how it was checked.

- Risk, legal, or IT teams hear about AI use only when there is a problem.

CONTINUITY

How reliably work continues when AI tools, models, or access change, slow down, or fail. High continuity means people know alternatives, can switch approaches without drama, and don’t let outages or tool changes stall important tasks.

HIGH SCORER

- People quickly switch to alternatives or adjust their approach when tools change or fail.

- Critical workflows have a “with AI” and “without AI” path, both understood by the team.

- Tool changes cause irritation, but not major disruption to delivery.

- Some individuals even help others adapt, suggesting workarounds and options.

LOW SCORER

- Work grinds to a halt when a favourite AI tool is down, blocked, or changed.

- People say “I cannot do this until ChatGPT/Gemini/Copilot is back.”

- Small tool changes create big frustration and delays.

- Managers feel that dependence on specific tools has increased fragility, not resilience.

MENTALITY

How naturally someone brings AI into their workflow, using it throughout the task rather than only at the end for polish. Strong mentality shows up as “AI-first, but not AI-only” in day-to-day work.

HIGH SCORER

- People naturally ask, “How can AI help here?” at the start of tasks, not just at the end.

- AI is used across the task: scoping, research, drafting, checking, learning.

- Teams share prompts or ways of working in chats and huddles without being told.

- AI is described as “part of how we work now,” not as a side experiment.

LOW SCORER

- AI shows up only at the end, mainly for “make this sound nicer” or grammar.

- People say things like “I will use AI if I get stuck” rather than “I will start with AI.”

- Most work is still scoped, planned, and structured without AI input.

- In meetings, AI is talked about as a tool for a few use cases, not as a normal part of how work gets done.

SCALABILITY

How easily effective AI-powered ways of working become simple habits and everyday culture. High scalability means prompts, templates, and workflows are captured and shared so others can reuse them, rather than reinventing them from scratch or leaving them locked inside one team.

HIGH SCORER

- Good AI prompts and workflows are captured as simple, reusable habits.

- Teams share “how we do this with AI” in channels, playbooks, or SOP steps.

- Successful patterns spread across teams or locations instead of staying local.

- New joiners get exposed to working AI examples early, not months later.

LOW SCORER

- AI wins stay as personal hacks in someone’s head or browser history.

- Different teams solve the same AI problems from scratch, with little sharing.

- There is no simple place to find good prompts, workflows, or examples.

- New joiners have to learn AI use on their own, person by person.

Common Misconceptions This Framework Clears Up

“AI effectiveness is about prompt quality.”

Prompting matters, but it is only one input. A team can write excellent prompts and still score low on Safety, Continuity, or Scalability. Effectiveness is a system, not a skill.

“If people are using AI, we are getting value.”

Usage rates say nothing about whether the outputs are good, safe, or spreading. High adoption with low Quality or low Safety can actively hurt an organisation.

“AI effectiveness is an IT or innovation team problem.”

Every driver in this framework is observable by a line manager. Velocity, Quality, and Mentality show up in daily work, not in dashboards. Effectiveness is a management conversation, not a technology one.

“Speed is the main benefit of AI.”

Velocity is one of seven drivers. Organisations that optimise only for speed often miss Capability (people doing new things), Safety (doing them responsibly), and Scalability (making sure the wins spread).

“Once people are trained, they are effective.”

Training builds awareness. Effectiveness requires practice, feedback, and reinforcement over time. That is why Mentality and Continuity exist as separate drivers. Knowing how is not the same as doing it consistently.

4+1 Different Ways to Use This Framework in Your Organisation

Practical application guidance for leaders, managers, and L&D teams.

RECOMMENDATION 1

Team diagnostic

Where are we strong, and where are we losing value?

Use the seven drivers as a structured lens for assessing your team’s current state. Rate each driver as low, developing, or strong based on observable behaviours, not self-reported confidence.

This gives you a map of where AI is genuinely helping and where effort is being wasted. Most teams discover that one or two drivers are quietly undermining the value of the others.

RECOMMENDATION 2

Manager conversation tool

How do we talk about AI performance without making it about the tools?

Each driver comes with clear indicators of what “low” and “high” look like. Use these as talking points in one-on-ones, team retrospectives, or quarterly reviews.

The language is deliberately non-technical. A manager does not need to understand models or APIs. They need to recognise whether their team’s AI-assisted work is fast, clear, safe, and spreading.

RECOMMENDATION 3

Programme design anchor

How do we build AI training that actually moves the needle?

Instead of designing programmes around tools or prompting techniques, map your learning outcomes to the seven drivers.

If your team scores low on Capability, the programme should focus on expanding what people can do with AI, not just making them faster at what they already do.

This stops L&D teams from defaulting to generic “AI 101” content.

RECOMMENDATION 4

Scaling playbook

How do we take what is working in one team and spread it across the organisation?

Use the Scalability driver as a forcing function.

For every AI workflow that works well, ask: is this captured somewhere others can find it? Is it simple enough to reuse? Are new joiners exposed to it early?

Most organisations have more internal AI expertise than they realise. The problem is access, not ability.

RECOMMENDATION 5

Invest 7 minutes in this assessment

AI EFFECTIVENESS SCORECARD

A Leadership-Level Assessment to Help Managers Understand Their Team’s AI Strengths, using the 7 Drivers of AI Effectiveness, in under 7 minutes!

About the time it takes to make a proper cup of coffee.

Imagine driving a car with a broken dashboard.

You wouldn’t know how fast you’re going, whether the airbag works, or how much fuel you have left.

That’s an uncomfortable way to drive.

Yet this is how many leaders are navigating AI today.

Teams say they’re “using AI”, but leaders lack visibility into how, how often, and how effectively it’s actually being used.

The AI Effectiveness Scorecard makes the invisible visible, so leaders can see what’s really happening across their teams, and decide what to do next.

And you can get it done in under 7 minutes!

What Will You Get in 7 Minutes?

Your Team’s Combined Score

A single, leadership-friendly score showing your overall AI effectiveness.

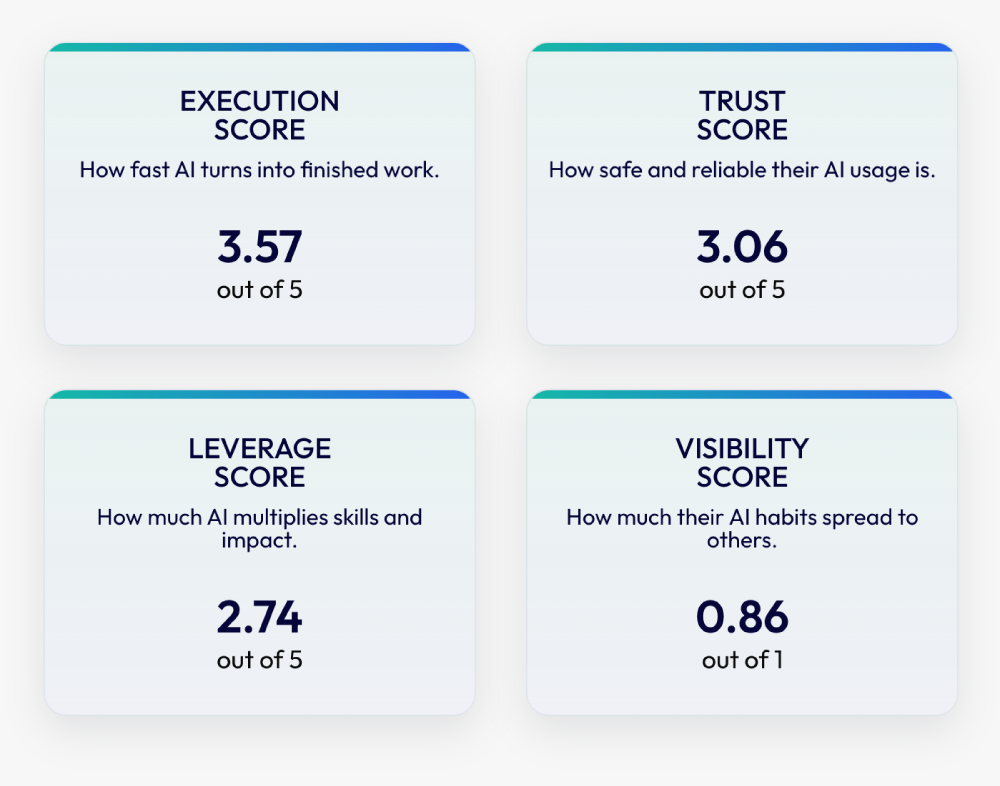

Your Key AI Performance Scores

Four focused scores to show how fast, safe, scalable, and visible AI really is inside your team.

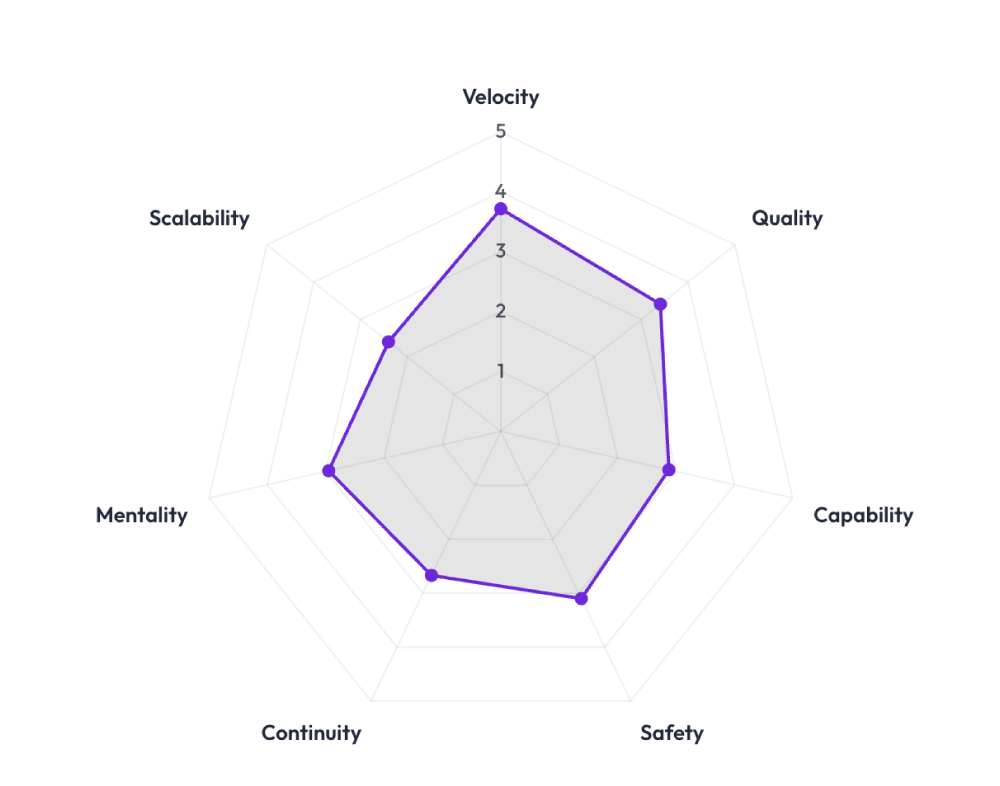

Your Team’s AI Signature

See how your team performs across the 7 workplace drivers that shape real-world AI results.

Strengths & Gaps by Driver

Compare each driver side-by-side and instantly spot where AI is helping most, and where support is needed.

Get your team’s AI Effectiveness Scorecard today, so everyone can Work Faster, Think Sharper and Learn Smarter with AI!

Frequently Asked Questions

Common questions about the AI effectiveness framework and how it applies to team AI performance.

What is the 7 Drivers of AI Effectiveness framework?

It is a practical framework that defines seven observable drivers of how well people and teams work with AI. It covers output quality, speed, capability expansion, safe use, consistency, mindset, and the ability to scale effective practices. It is designed for managers and leaders, not technologists.

How is AI effectiveness different from AI adoption?

AI adoption measures whether people are using AI tools. AI effectiveness measures whether that usage is producing better work, safer decisions, and scalable practices. High adoption with low effectiveness is common and costly.

Can this framework be used across different industries?

Yes. The seven drivers are role-agnostic and tool-agnostic. They describe behaviours and outcomes, not specific technologies. Organisations in financial services, healthcare, education, manufacturing, and professional services have all used this lens.

Do we need to score high on all seven drivers?

Not necessarily at the same time. The framework helps you prioritise. A team that scores low on Safety should address that before pushing for Scalability. Context determines which drivers matter most right now.

How does this relate to team AI performance?

Team AI performance is the combined result of all seven drivers working together. If Velocity is high but Quality is low, the team is producing fast but unreliable work. The framework helps leaders see the full picture instead of optimising for a single metric.

Is this only for large organisations?

No. Teams as small as five people benefit from the shared language the framework provides. The drivers scale up or down. What changes is the complexity of measurement, not the relevance of the questions.

What is AI literacy and how does it connect to effectiveness?

AI literacy is the baseline understanding of what AI can and cannot do. It is necessary but not sufficient. The 7 Drivers framework goes beyond literacy by measuring whether that understanding translates into better, safer, and more scalable work.

How do we get started with this framework?

Start with an honest team-level assessment. For each driver, ask: are we low, developing, or strong? Use the observable indicators provided with each driver. That conversation alone often surfaces insights that months of tool usage data cannot.